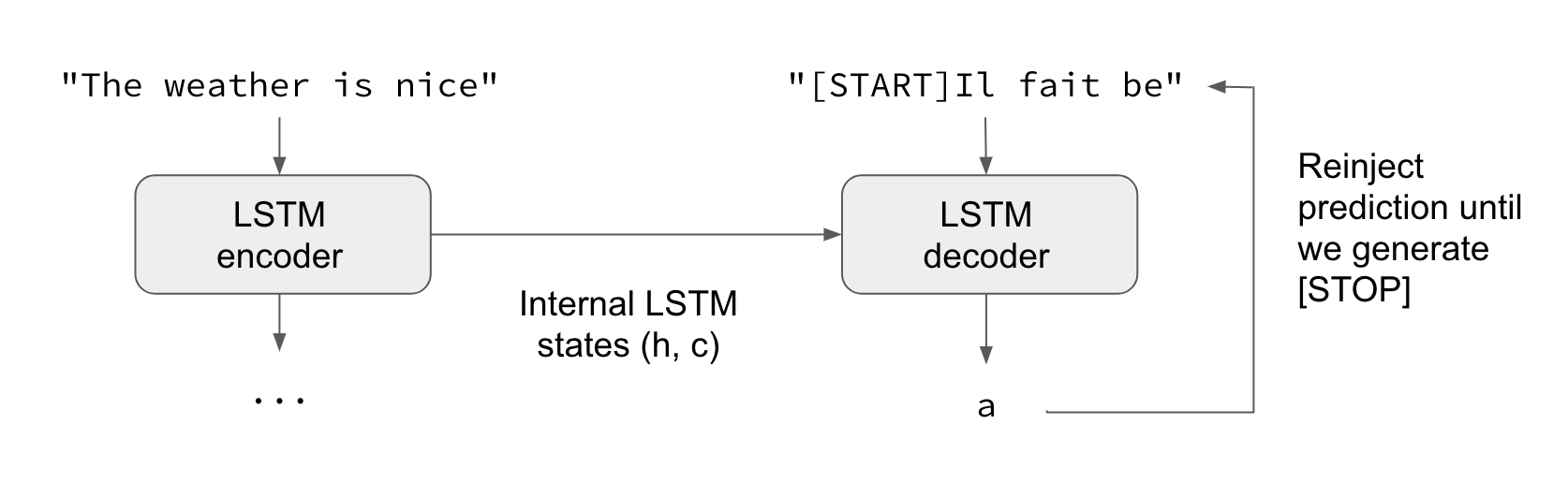

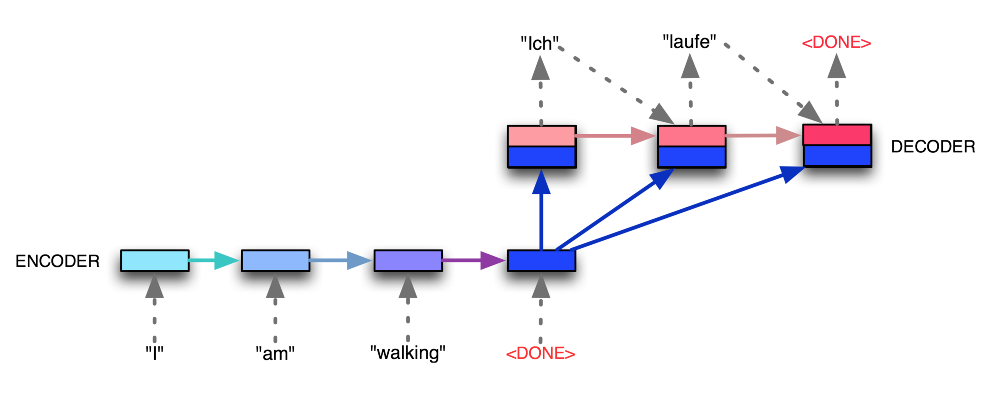

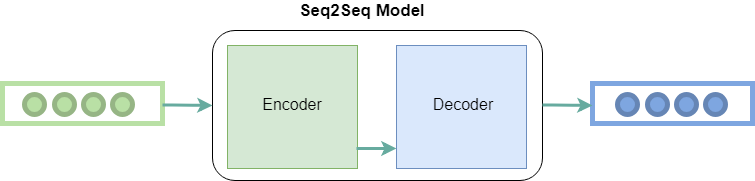

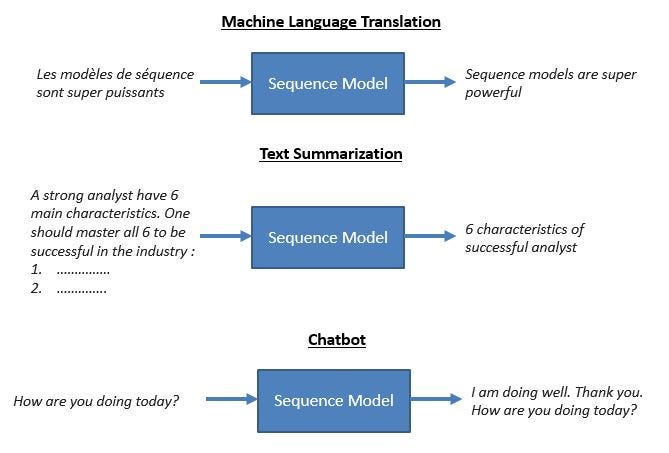

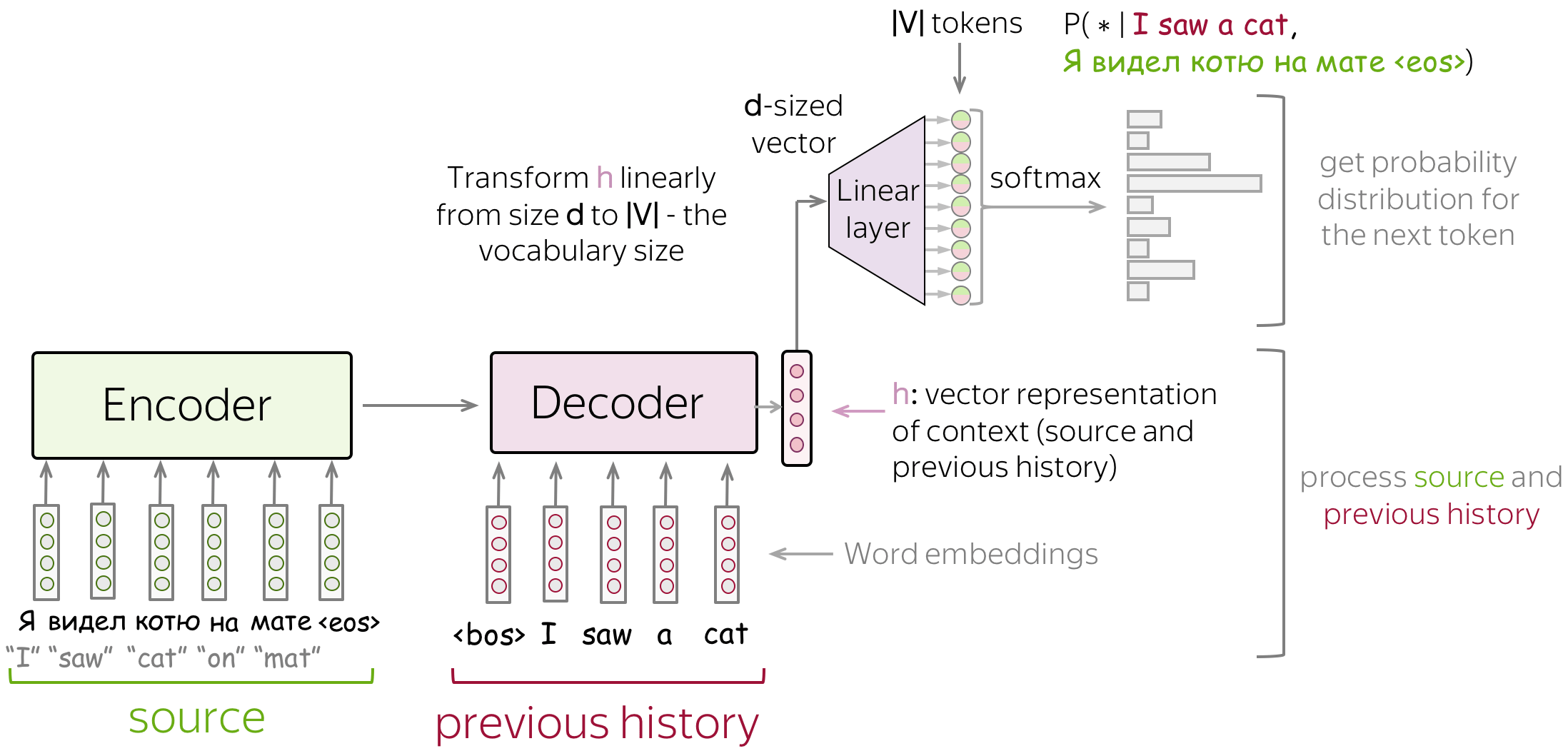

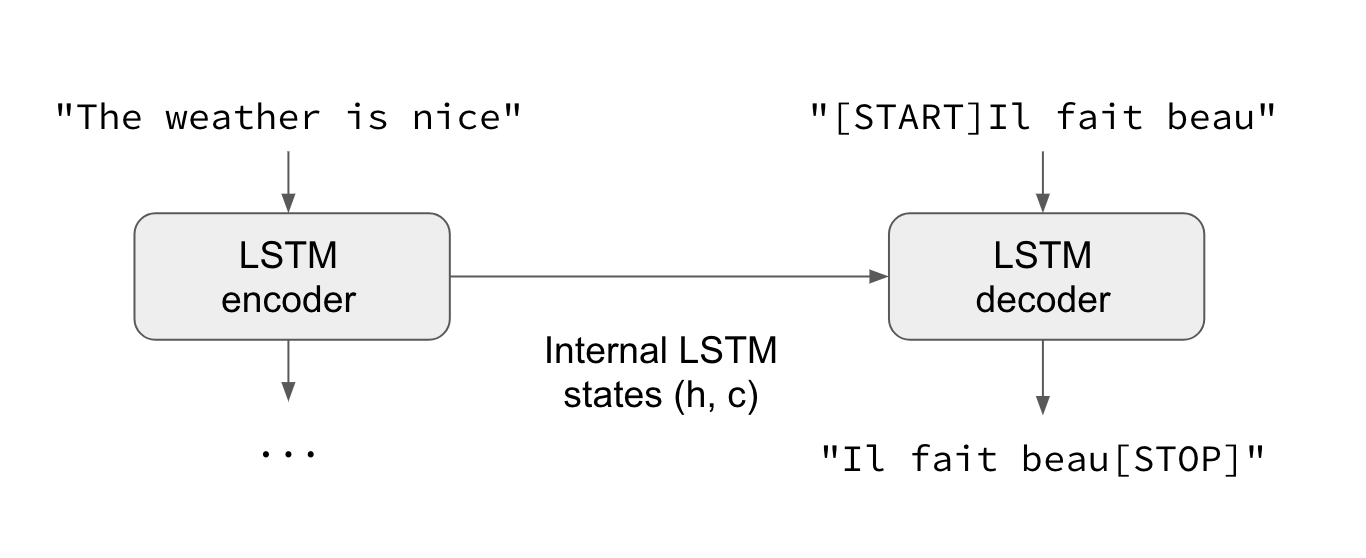

Attention — Seq2Seq Models. Sequence-to-sequence (abrv. Seq2Seq)… | by Pranay Dugar | Towards Data Science

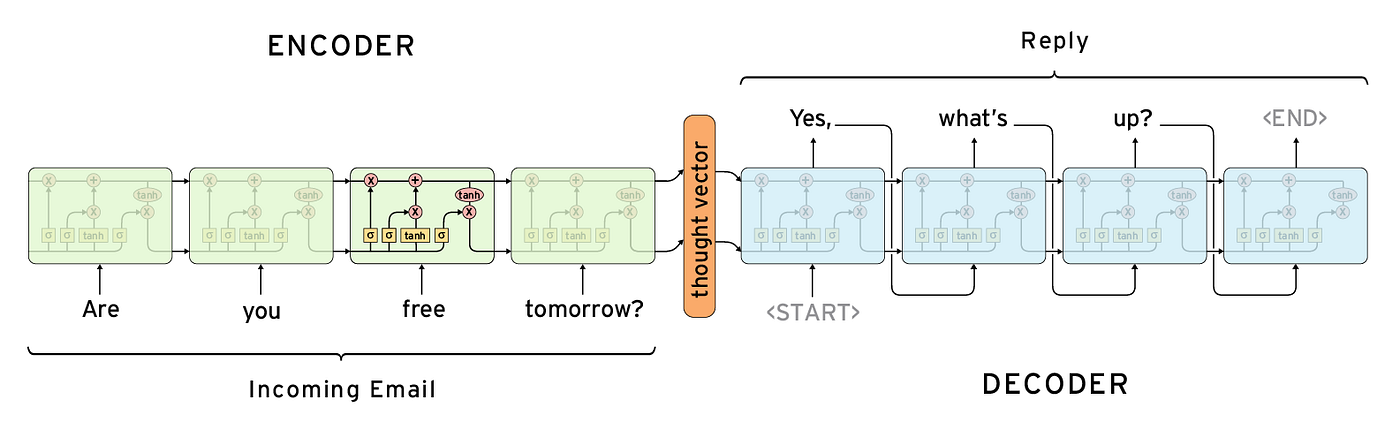

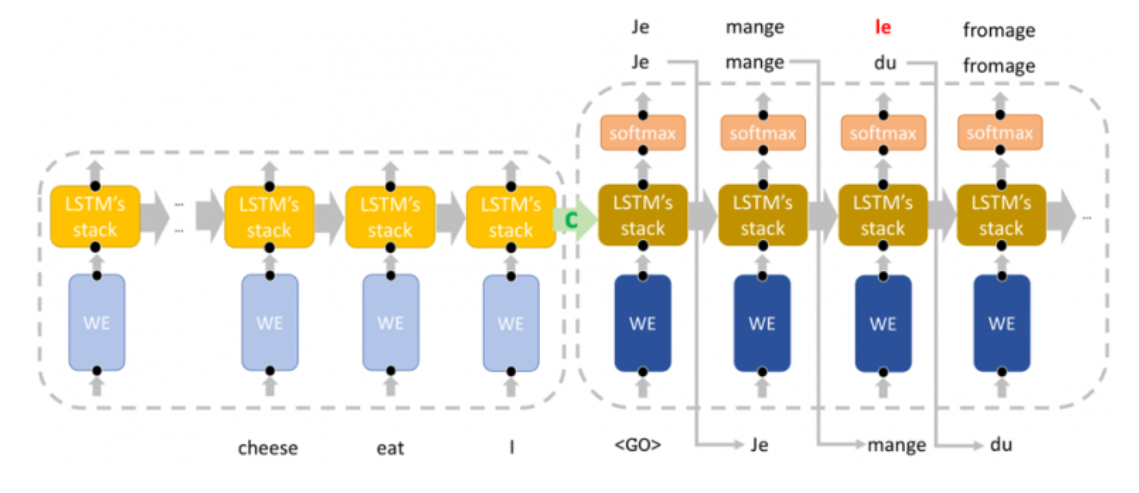

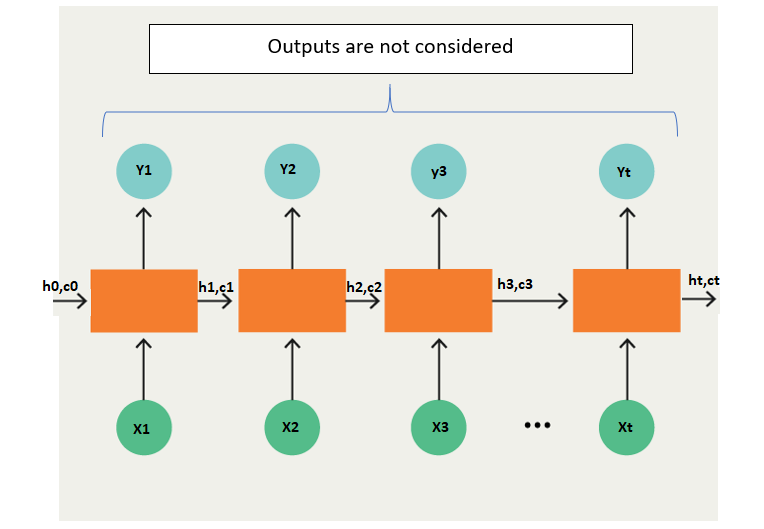

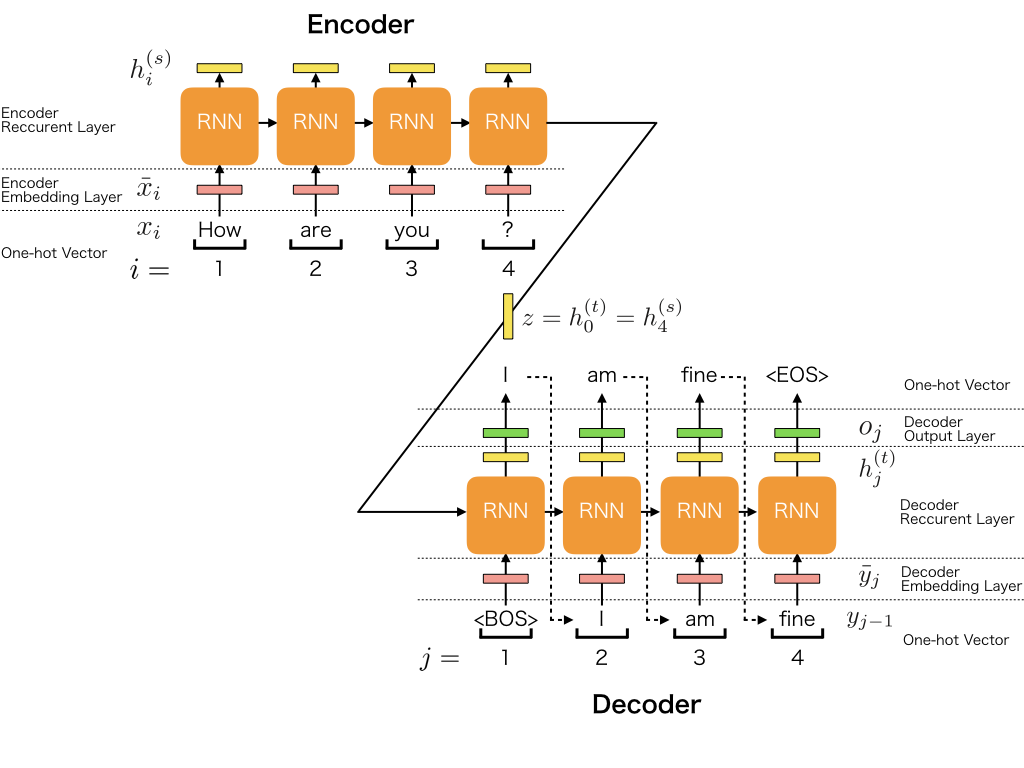

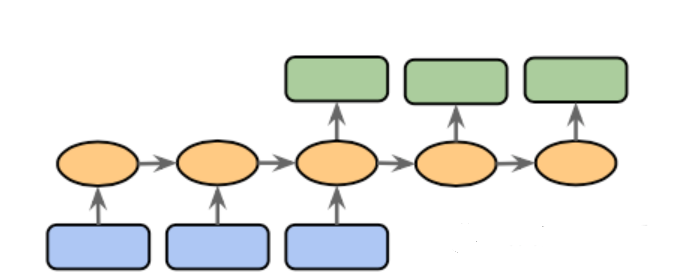

Encoder-Decoder Sequence to Sequence(Seq2Seq) model explained by Abhilash | RNN | LSTM | Transformer - YouTube

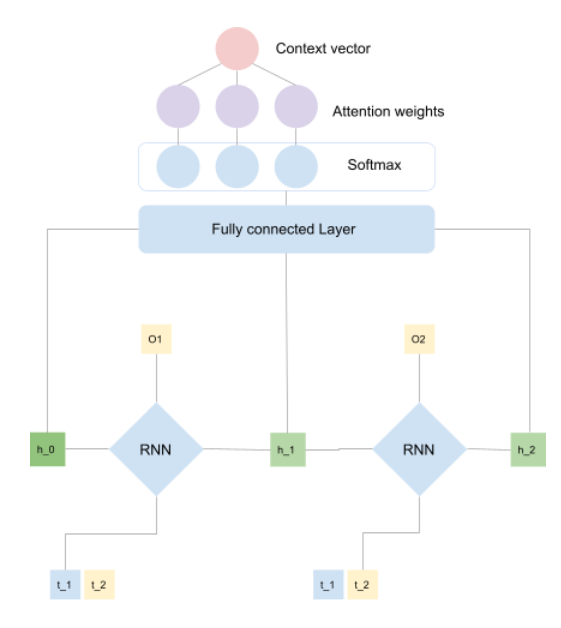

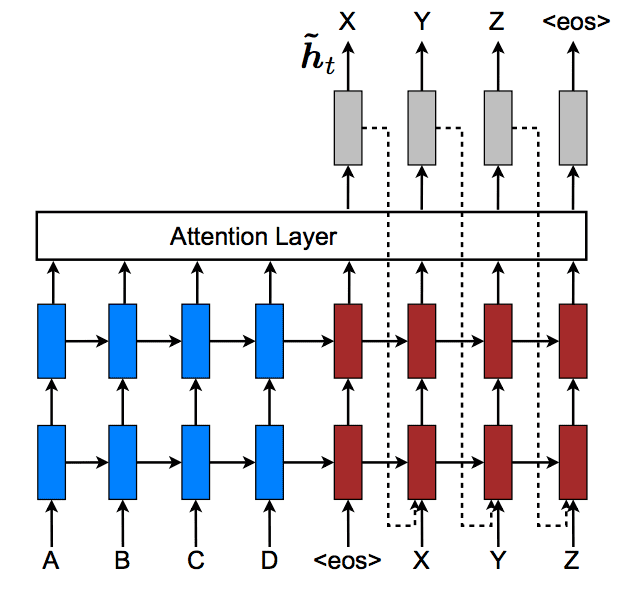

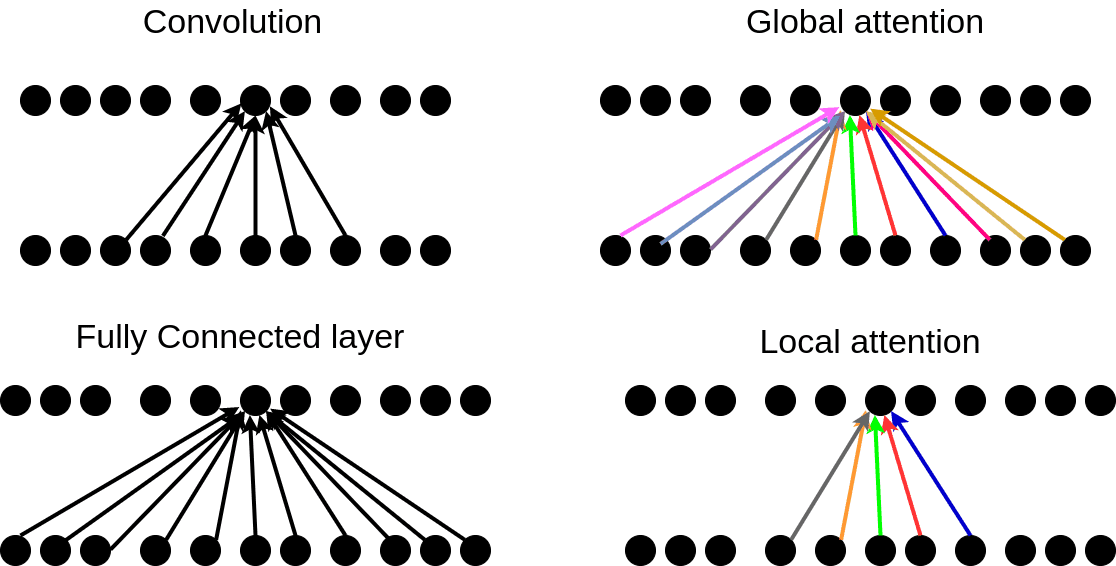

How Attention works in Deep Learning: understanding the attention mechanism in sequence models | AI Summer